Tokenization, Stemming, and Lemmatization Made Easy

04 May, 2026


Learn tokenization, stemming, and lemmatization in simple terms, and understand how AI processes human language effectively.

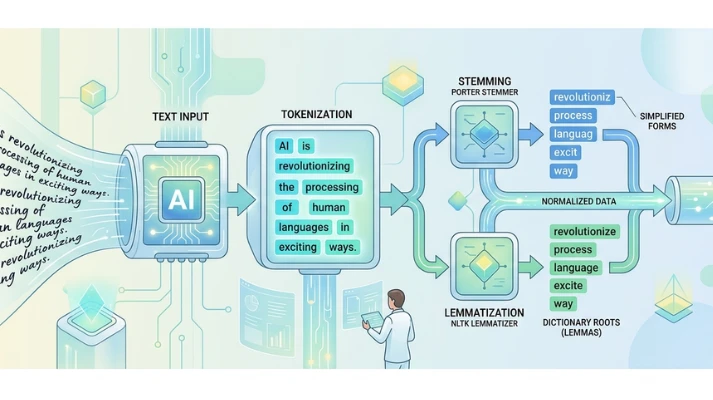

Understanding how machines process human language is a key part of Natural Language Processing. When you type a sentence into a search engine or chatbot, it does not understand the text the same way humans do. It first breaks the text into smaller pieces and simplifies them for analysis. These steps are called tokenization, stemming, and lemmatization, and they form the backbone of many AI systems. If you want to build strong foundational skills, you can consider enrolling in an Artificial Intelligence Course in Mumbai at FITA Academy to deepen your understanding through guided learning.

What is Tokenization

Tokenization refers to the method of dividing text into smaller components known as tokens. These tokens can be words, phrases, or even characters, depending on the task. For example, a sentence can be broken into individual words so that a machine can process each one separately. This step is important because raw text is difficult for machines to interpret. Tokenization helps structure the data in a way that algorithms can handle easily. It also serves an important function in organizing data for subsequent processing stages, such as stemming and lemmatization.
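As a rough illustration of the idea above, here is a minimal sketch of word-level and character-level tokenization using only the Python standard library. The function names and the regular expression are assumptions made for this example; production tokenizers handle punctuation, numbers, and other languages far more carefully.

```python
import re

def tokenize_words(text):
    """Split text into lowercase word tokens, keeping apostrophes inside words."""
    return re.findall(r"[a-z0-9]+(?:'[a-z0-9]+)*", text.lower())

def tokenize_chars(text):
    """Split text into single-character tokens, skipping whitespace."""
    return [ch for ch in text if not ch.isspace()]

sentence = "Machines don't read text the way humans do."
word_tokens = tokenize_words(sentence)
char_tokens = tokenize_chars("AI is fun")

print(word_tokens)
# ['machines', "don't", 'read', 'text', 'the', 'way', 'humans', 'do']
print(char_tokens)
# ['A', 'I', 'i', 's', 'f', 'u', 'n']
```

The same sentence yields very different token lists depending on the unit chosen, which is why the token type is picked to suit the task.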

Understanding Stemming

Stemming reduces words to a crude base form, called a stem, by chopping off word endings with simple rules. Most stemmers remove suffixes; only some also strip prefixes. For example, running and runs are both reduced to run, and a more aggressive stemmer may reduce runner to run as well. This helps machines treat related words as the same concept. However, stemming does not always produce real words (studies can become studi), and it can merge unrelated words, which sometimes affects accuracy. Despite these limitations, it is widely used because it is fast and needs no dictionary. If you are interested in learning how such techniques are applied in real projects, you can explore options like an AI Course in Kolkata to gain practical exposure and hands-on experience.
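To make the idea of rule-based suffix stripping concrete, here is a deliberately tiny stemmer. It is a toy written for this article, not the Porter algorithm or any library's implementation; the suffix list, the minimum stem length, and the `toy_stem` name are all assumptions chosen to keep the example short.

```python
# Suffixes are tried in this order; the first match wins.
SUFFIXES = ("ing", "ed", "er", "es", "s")

def toy_stem(word):
    """Strip one common suffix, leaving a stem of at least three letters."""
    word = word.lower()
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            stem = word[: -len(suffix)]
            # Undo a doubled final consonant, e.g. "runn" -> "run".
            if stem[-1] == stem[-2] and stem[-1] not in "aeiouls":
                stem = stem[:-1]
            return stem
    return word

stems = [toy_stem(w) for w in ["running", "runner", "runs"]]
print(stems)                # ['run', 'run', 'run']
print(toy_stem("studies"))  # 'studi' (not a real word)
```

The last line shows the limitation mentioned above: the rules know nothing about vocabulary, so the result is not guaranteed to be a dictionary word.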

What is Lemmatization

Lemmatization is a more advanced technique than stemming. It reduces words to their base or dictionary form, known as the lemma. Unlike stemming, lemmatization returns a valid dictionary word. For example, the word better is converted to good when it is used as an adjective. To do this, the method relies on a vocabulary and on grammatical information such as the part of speech. Although it requires more processing power, it usually gives more accurate results. Lemmatization is often preferred in applications where accuracy is more important than speed.
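The dictionary lookup behind lemmatization can be sketched with a hand-written lexicon. Everything here is hypothetical: the `LEXICON` entries, the part-of-speech labels, and the `toy_lemmatize` function exist only for illustration, whereas real lemmatizers draw on large vocabularies and morphological rules.

```python
# Tiny illustrative lexicon: (surface form, part of speech) -> lemma.
LEXICON = {
    ("better", "adj"): "good",
    ("better", "adv"): "well",
    ("best", "adj"): "good",
    ("running", "verb"): "run",
    ("ran", "verb"): "run",
    ("mice", "noun"): "mouse",
    ("studies", "noun"): "study",
}

def toy_lemmatize(word, pos):
    """Return the lemma for (word, pos), or the lowercased word if unknown."""
    key = (word.lower(), pos)
    return LEXICON.get(key, word.lower())

print(toy_lemmatize("better", "adj"))   # good
print(toy_lemmatize("better", "adv"))   # well
print(toy_lemmatize("Mice", "noun"))    # mouse
print(toy_lemmatize("table", "noun"))   # table (not in the lexicon)
```

Note that the same word, better, maps to different lemmas depending on its part of speech, which is exactly the grammatical information a stemmer ignores.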

Key Differences Between Stemming and Lemmatization

Stemming focuses on cutting words down to a basic form without considering context. Lemmatization, on the other hand, takes meaning and grammar into account. This makes lemmatization more reliable but also more complex. Stemming is useful for quick tasks where speed matters. Lemmatization is better suited for applications like chatbots and search engines, where understanding context is important. Both techniques have their own advantages, and the choice depends on the specific use case.
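The role of context can be seen by running a context-free rule and a context-aware lookup over the same ambiguous words. This is a sketch under stated assumptions: `strip_suffix` stands in for a stemmer, `LEMMAS` is a hypothetical four-entry lexicon, and the part-of-speech tags are supplied by hand rather than predicted.

```python
def strip_suffix(word):
    """Context-free rule: drop a trailing 'es' or 's' whatever the word means."""
    for suffix in ("es", "s"):
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

# Hypothetical mini-lexicon keyed by (word, part of speech).
LEMMAS = {
    ("leaves", "noun"): "leaf",
    ("leaves", "verb"): "leave",
    ("saw", "noun"): "saw",
    ("saw", "verb"): "see",
}

def lookup_lemma(word, pos):
    """Context-aware lookup: the answer depends on the part of speech."""
    return LEMMAS.get((word, pos), word)

# Each row: word, part of speech, rule-based stem, looked-up lemma.
rows = [(w, pos, strip_suffix(w), lookup_lemma(w, pos)) for (w, pos) in LEMMAS]
for row in rows:
    print("{:<8}{:<6}{:<8}{}".format(*row))
```

The rule returns leav for both uses of leaves, while the lookup separates leaf from leave. The price is that the lookup needs a lexicon and a part-of-speech tag, which is where the extra complexity comes from.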

Why These Techniques Matter

Tokenization, stemming, and lemmatization are core preprocessing steps in many language-processing systems. By mapping different forms of a word to one token, they shrink the vocabulary a system has to handle, which reduces complexity and can improve performance. Without these steps, machines would struggle to interpret large amounts of text data. These techniques are widely used in applications like search engines, recommendation systems, and virtual assistants. Learning these basics can open the door to more advanced topics in Artificial Intelligence.

In simple terms, tokenization breaks text into parts, stemming trims words to their roots, and lemmatization refines them into meaningful base forms. Together, they help machines understand and process human language more effectively. These concepts may seem simple, but they are powerful tools in the world of AI. If you are planning to build a career in this field, you can take the next step by joining AI Courses in Delhi to strengthen your skills and gain practical knowledge for future opportunities.

