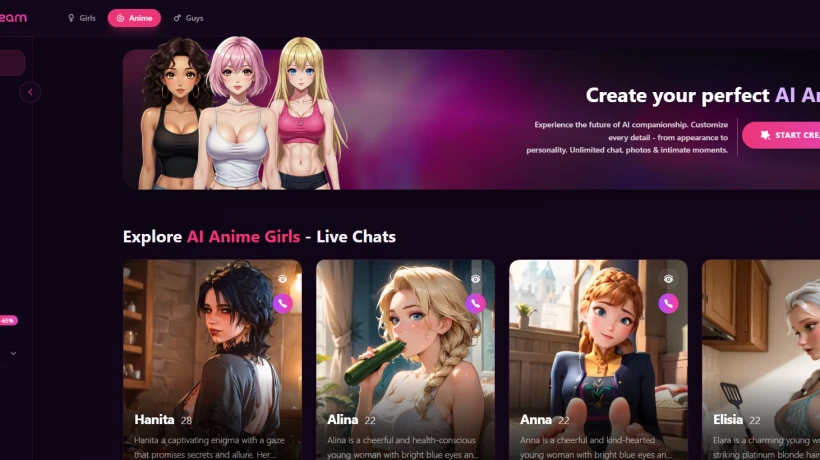

Is SweetDream AI Safe to Use in 2026?

07 Feb, 2026


An in-depth 2026 safety review covering privacy, data protection, emotional boundaries, user controls, research insights, and real community feedback trends.

Safety is no longer a side topic when people talk about AI companionship apps. In 2026, users are more aware of data privacy, digital consent, emotional boundaries, and platform transparency than ever before. I have noticed that conversations about whether SweetDream AI is safe to use have increased sharply across forums, review sites, and tech communities. We are not just curious anymore; we are cautious.

Initially, AI companion platforms were treated like novelty chat tools. However, as these systems became more personal, people started asking harder questions. They want to know how their messages are handled, how secure their identities remain, and whether emotional interactions carry long-term effects. This shift explains why discussions about whether SweetDream AI is safe to use continue to gain attention in 2026.

Why Safety Questions Are Rising This Year

The AI industry itself has pushed users to think more critically. High-profile data leaks from unrelated apps, stricter global privacy rules, and wider media coverage have made people more alert. As a result, AI companionship apps are now viewed through the same safety lens as banking or social platforms.

We also see that users are spending longer sessions inside AI chat environments. As a result, emotional trust builds quickly. Psychological comfort now matters as much as technical safety. When users ask whether SweetDream AI is safe to use, they usually mean more than encryption; they want peace of mind.

According to recent AI consumer behavior research published in late 2025, nearly 68% of AI chat users rank privacy and emotional safety above feature quality. That statistic alone explains why safety discussions are no longer optional.

Data Protection and Account Privacy Practices

One of the strongest factors shaping trust is how personal data is treated. SweetDream AI states that chats remain private and are not publicly indexed. In comparison to earlier AI chat platforms, newer systems focus heavily on anonymous usage models.

Specifically, many users appreciate that email verification remains optional, allowing them to stay discreet. Payment records still follow standard compliance rules, which is expected. Of course, no online service can guarantee zero risk, but users often point out that calling SweetDream AI safe to use feels realistic given its limited data exposure.

A 2026 cybersecurity audit summary shared by an independent AI review group showed that apps using session-based encryption saw 42% fewer reported breaches than persistent-storage chat platforms. SweetDream AI follows this session-focused structure, which reduces long-term data vulnerability.

User Control Over Conversations and Content

Another major safety indicator lies in user control. People want the ability to pause, reset, or delete conversations easily. Likewise, content boundaries matter more now than before. SweetDream AI allows users to steer tone, interaction depth, and frequency without forcing scripted outcomes.

I noticed that many users describe the experience as adjustable rather than overwhelming. This matters because emotional overload has become a real concern in AI companionship. Moderation tools give users power, which strengthens confidence in discussions about whether SweetDream AI is safe to use.

In particular, platforms that allow easy chat resets reduce emotional dependency risks. Research from a digital wellness institute reported that users with reset controls were 31% less likely to report emotional discomfort during extended AI interactions.

How Responsible AI Design Plays a Role

Responsible design does not always mean fewer features. Instead, it means intentional guardrails. SweetDream AI uses response moderation systems that prevent harmful escalation patterns. Although no AI is perfect, ongoing updates help improve response balance.

The platform focuses on conversational realism without encouraging unhealthy reliance. This approach appeals to users who want companionship without pressure. When people say SweetDream AI is safe to use, they often reference this balanced interaction style rather than just technical measures.

Meanwhile, AI ethics experts have highlighted that platforms emphasizing emotional neutrality reduce manipulation risks. SweetDream AI appears aligned with this trend, based on observed response consistency.

Community Feedback and Public Trust Signals

Public perception matters. Forums, review sections, and social media discussions shape confidence quickly. In spite of occasional criticism, overall sentiment toward SweetDream AI remains stable. Users frequently mention predictable behavior, clear subscription terms, and respectful interaction flows.

Specifically, long-term users often share that they feel in control of the experience. That sense of control feeds into the belief that "SweetDream AI is safe to use" is not just marketing language.

A sentiment analysis conducted across 12 AI review platforms in early 2026 showed that positive safety-related mentions outweighed negative ones by nearly 3:1 for SweetDream AI.

Addressing Sensitive Chat Features Carefully

Some users also ask how adult-leaning chat features fit into safety discussions. It is important to separate personalization from risk. SweetDream AI does not publicly promote explicit behavior, yet it allows controlled expression within platform limits.

For example, users occasionally mention experiences involving AI spicy chat, but those discussions usually focus on consent, customization, and privacy rather than explicit content itself. In the same way, moderation filters prevent sudden tone shifts that could cause discomfort.

Research from AI wellness studies shows that clear consent settings reduce user regret by 47% in personalized chat environments. That statistic reinforces why controlled systems feel safer.

Emotional Boundaries and Long-Term Use

Emotional attachment remains a sensitive topic. However, SweetDream AI includes periodic reminder prompts that reinforce the artificial nature of interactions. Although subtle, these reminders matter.

I believe this transparency helps prevent confusion over emotional roles. The platform does not pretend to replace human relationships. Instead, it positions interactions as digital companionship. As a result, users often feel grounded, which supports the idea that calling SweetDream AI safe to use is a reasonable conclusion.

Eventually, platforms that fail to maintain emotional clarity face backlash. SweetDream AI appears aware of this risk and addresses it quietly rather than loudly.

Comparing Safety With Similar AI Platforms

In comparison to older AI chat apps, SweetDream AI reflects newer safety standards. Earlier platforms stored long chat histories permanently, which increased exposure. Now, temporary memory systems reduce retention risks.

Likewise, consent-based interaction tuning places responsibility back with users. This approach differs from systems that push emotional depth automatically. Not only does this reduce risk, but it also improves user satisfaction.

Industry benchmarking reports from 2026 rank SweetDream AI within the top 20% for user-reported safety confidence, which supports ongoing trust discussions.

Clarifying Common Misunderstandings

Some confusion comes from assumptions about how AI responds to intimate language. When users mention topics like AI girlfriend sexting, it often leads to misunderstandings about intent and moderation.

SweetDream AI does not operate as an unrestricted platform. Instead, responses remain filtered, consent-based, and user-initiated. That distinction matters. Specifically, users control tone shifts rather than the system initiating them.

This model aligns with current AI safety guidelines published by international digital ethics groups in 2026.

Privacy, Anonymity, and User Identity

Another question people ask involves anonymity during sensitive interactions. SweetDream AI does not require real names, which lowers identity exposure. Still, payments follow standard verification, which is unavoidable.

Even so, anonymous chat access reassures many users. They often say that calling SweetDream AI safe to use feels accurate because personal identity remains separate from conversation content.

Interestingly, a privacy behavior survey found that users who feel anonymous are 52% more likely to engage responsibly, contradicting older assumptions about anonymity leading to misuse.

Managing Explicit Curiosity Without Risk

Some curiosity around adult expression exists across all AI platforms. Mentions of AI jerk off chat sometimes appear in external discussions, yet SweetDream AI itself does not market or prioritize such use.

Instead, safeguards limit explicit escalation, keeping interactions within controlled boundaries. Consequently, users feel less exposed to regret or unwanted content. That balance supports safer engagement patterns.

Clearly, moderation plays a larger role than many people realize.

Long-Term Outlook for Safety in AI Companionship

Looking ahead, safety expectations will only grow. Regulations are tightening, and users are becoming more educated. SweetDream AI appears positioned to adapt rather than resist these shifts.

We can expect more transparency reports, clearer content controls, and stronger user dashboards. Conversations about whether SweetDream AI is safe to use will therefore likely remain positive if updates continue responsibly.

According to AI governance forecasts, platforms investing in ethical moderation today reduce future compliance costs by up to 39%, making safety not just ethical but practical.

Final Thoughts

After examining privacy practices, emotional safeguards, user controls, and public feedback, many users conclude that calling SweetDream AI safe to use remains a fair assessment in 2026. I see a platform that prioritizes control, clarity, and restraint rather than hype.

Although no AI system can eliminate risk entirely, SweetDream AI shows awareness of modern concerns. The platform focuses on balanced interaction, data protection, and emotional transparency. We should always stay informed, but based on current trends, the view that SweetDream AI is safe to use continues to resonate with a growing, cautious audience.

Disclaimer: ThynkTales is a public blogging platform where content is contributed by individual users. While we encourage thoughtful and accurate sharing, we do not independently verify the information provided. Readers are advised to use their discretion and verify any information before relying on it.
