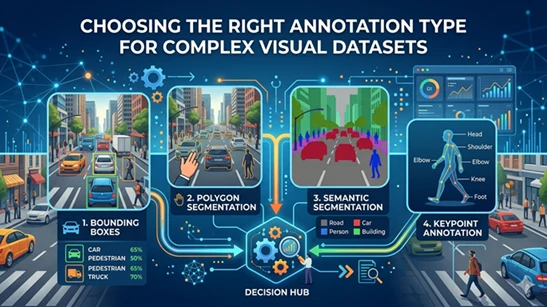

Choosing the Right Annotation Type for Complex Visual Datasets

10 Apr, 2026


Choosing the right annotation type is crucial for complex visual datasets. Annotera helps businesses select precise labeling methods, from bounding boxes to segmentation, ensuring scalable, accurate AI model training and better performance.

In today’s AI-driven landscape, the success of any computer vision model depends heavily on the quality and relevance of its training data. For businesses working with complex visual datasets, selecting the right annotation type is one of the most critical decisions in the model development lifecycle. Whether the application involves autonomous driving, medical imaging, retail analytics, manufacturing, or satellite monitoring, the annotation strategy directly impacts model precision, scalability, and deployment efficiency.

At Annotera, we understand that no single annotation method fits every dataset. The complexity of visual data varies with object shape, density, overlap, and movement, as well as with the accuracy the output must reach. This is why the annotation type must be chosen to fit both the project goals and the model architecture. As a trusted data annotation company and image annotation company, we help businesses identify the most effective labeling strategy for high-performance AI systems.

Why Annotation Type Matters

Image annotation is more than simply marking objects in an image. The annotation type defines what a machine learning model learns to output, whether that is a box around an object, an exact outline, a class for every pixel, or a set of landmark points. Poor annotation choices can lead to inaccurate predictions, higher retraining costs, and delays in production deployment.

For example, bounding boxes may not capture enough detail for partially occluded objects, such as road signs hidden behind trees, or for irregularly shaped ones, such as tumors in medical scans, because much of each box ends up covering background. In such cases, polygon annotation or semantic segmentation offers much higher precision.

The annotation type should always be selected based on:

- Object complexity

- Boundary precision requirements

- Dataset scale

- Model use case

- Budget and turnaround time

Common Annotation Types for Complex Visual Datasets

1. Bounding Box Annotation

Bounding box annotation is one of the most widely used methods in computer vision. It involves drawing rectangular boxes around target objects.

This method works best for:

- Object detection models

- Autonomous vehicle datasets

- Retail product detection

- Security surveillance

Bounding boxes are fast and cost-effective to produce, which makes them a common choice for projects that need large labeled datasets quickly, including those that rely on data annotation outsourcing. However, they may not suit irregular shapes or overlapping objects, where a rectangle inevitably includes background or parts of neighboring objects.

For example, identifying cars, pedestrians, and traffic signs in urban road scenes is often effectively managed using bounding boxes.
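In practice, a bounding box reduces to four numbers per object. The sketch below is a minimal illustration, assuming the COCO convention of storing a box as [x, y, width, height] measured from the image's top-left corner; the `BoundingBox` class and its methods are hypothetical helpers, not part of any annotation tool's API. It converts boxes to corner coordinates and clips those that run past the image edge, a common case for vehicles entering or leaving the frame.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundingBox:
    """Axis-aligned box stored as corner coordinates, in pixels (hypothetical helper)."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    @classmethod
    def from_xywh(cls, label: str, x: float, y: float, w: float, h: float) -> "BoundingBox":
        # COCO-style boxes store the top-left corner plus width and height.
        if w < 0 or h < 0:
            raise ValueError("width and height must be non-negative")
        return cls(label, x, y, x + w, y + h)

    def clip(self, img_w: float, img_h: float) -> "BoundingBox":
        # Keep every corner inside the image bounds [0, img_w] x [0, img_h].
        def clamp(v: float, hi: float) -> float:
            return min(max(v, 0), hi)

        return BoundingBox(self.label,
                           clamp(self.x_min, img_w), clamp(self.y_min, img_h),
                           clamp(self.x_max, img_w), clamp(self.y_max, img_h))

    @property
    def area(self) -> float:
        return max(0, self.x_max - self.x_min) * max(0, self.y_max - self.y_min)


# A car whose box extends past the right edge of a 640 x 480 frame.
car = BoundingBox.from_xywh("car", 600, 200, 80, 40).clip(640, 480)
print(car.x_max, car.area)  # 640 1600
```

Clipping shrinks the 80-pixel-wide box to the 40 pixels that are actually inside the frame, so the stored label matches what the model can see.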

2. Polygon Annotation

When datasets contain objects with irregular boundaries, polygon annotation becomes essential. Instead of a rectangular box, annotators place multiple points around the object’s edges to create a precise outline.

This method is ideal for:

- Building footprint extraction

- Satellite imagery

- Medical imaging

- Agriculture datasets

- Complex product shapes

Polygon labeling provides better spatial accuracy, especially for models requiring fine-grained object recognition. For example, mapping the exact shape of buildings in aerial imagery requires polygon annotation rather than simple boxes.

As an experienced image annotation outsourcing partner, Annotera often recommends polygon labeling for datasets where object contours significantly influence prediction outcomes.
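A quick way to see how much precision a box gives up on an irregular shape is to compare a polygon's area with the area of its tightest bounding box. The sketch below uses the shoelace formula and assumes a simple (non-self-intersecting) polygon in pixel coordinates; `polygon_area`, `box_fill_ratio`, and the L-shaped footprint are hypothetical, made-up examples.

```python
def polygon_area(points: list[tuple[float, float]]) -> float:
    """Area of a simple (non-self-intersecting) polygon via the shoelace formula."""
    n = len(points)
    if n < 3:
        return 0.0
    twice_area = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the outline
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


def box_fill_ratio(points: list[tuple[float, float]]) -> float:
    """Fraction of the tightest bounding box that the polygon actually covers."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    box_area = (max(xs) - min(xs)) * (max(ys) - min(ys))
    return polygon_area(points) / box_area if box_area > 0 else 0.0


# Hypothetical L-shaped building footprint, in pixel coordinates.
footprint = [(0, 0), (10, 0), (10, 4), (4, 4), (4, 10), (0, 10)]
print(polygon_area(footprint))    # 64.0
print(box_fill_ratio(footprint))  # 0.64
```

For this footprint, over a third of the bounding box covers ground that is not part of the building, which is the error polygon annotation avoids.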

3. Semantic Segmentation

Semantic segmentation assigns a class label to every pixel in an image. This allows models to understand scene context at the pixel level.

This is commonly used for:

- Self-driving vehicles

- Medical diagnosis

- Land cover analysis

- Industrial defect detection

For instance, in autonomous driving datasets, every pixel may be labeled as road, pedestrian, vehicle, sky, or sidewalk. This enables detailed environmental understanding.

Semantic segmentation is best suited for highly complex visual datasets where contextual scene understanding is essential.
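Concretely, a semantic segmentation label is a mask with the same height and width as the image, holding one class ID per pixel. The sketch below uses a tiny hand-written mask and a hypothetical class-ID mapping; counting pixels per class like this is one simple way to check a dataset for class imbalance before training.

```python
from collections import Counter

# Hypothetical class IDs for a driving scene; real projects define their own.
CLASSES = {0: "road", 1: "sidewalk", 2: "vehicle", 3: "pedestrian", 4: "sky"}

# A tiny 4 x 6 semantic mask: every pixel carries exactly one class ID.
mask = [
    [4, 4, 4, 4, 4, 4],
    [1, 2, 2, 0, 3, 1],
    [0, 2, 2, 0, 3, 1],
    [0, 0, 0, 0, 0, 0],
]


def class_pixel_fractions(mask: list[list[int]]) -> dict[str, float]:
    """Share of all pixels assigned to each class present in the mask."""
    counts = Counter(pixel for row in mask for pixel in row)
    total = sum(counts.values())
    return {CLASSES[c]: n / total for c, n in sorted(counts.items())}


print(class_pixel_fractions(mask))
```

The mask only records that four pixels are "vehicle"; it cannot say whether they belong to one car or two.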

4. Instance Segmentation

While semantic segmentation assigns the same label to every pixel of a class, instance segmentation also distinguishes between individual objects of that class.

For example, in a crowded street image, each pedestrian receives its own separate mask instead of all pedestrians sharing a single “pedestrian” region.

This is useful for:

- Crowd analytics

- Cell counting in healthcare

- Manufacturing quality checks

- Robotics

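For dense datasets where objects of the same class touch or overlap, instance segmentation lets a model separate, count, and track individual objects, which semantic segmentation alone cannot do.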
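The difference shows up clearly when counting objects. The sketch below starts from a semantic-style binary mask (1 = cell, 0 = background) and recovers separate instances with connected-component labeling, implemented as a breadth-first flood fill. This is a swapped-in post-processing technique used only for illustration, not how instance annotations are produced: it merges any objects that touch, which is exactly why dense datasets need each instance labeled individually. `label_instances` is a hypothetical helper.

```python
from collections import deque


def label_instances(binary_mask: list[list[int]]) -> tuple[list[list[int]], int]:
    """Give each 4-connected blob of foreground pixels its own ID (1, 2, ...)."""
    h, w = len(binary_mask), len(binary_mask[0])
    labels = [[0] * w for _ in range(h)]
    next_id = 0
    for sy in range(h):
        for sx in range(w):
            if not binary_mask[sy][sx] or labels[sy][sx]:
                continue  # background, or already part of a labeled blob
            next_id += 1
            labels[sy][sx] = next_id
            queue = deque([(sy, sx)])
            while queue:  # breadth-first flood fill of one blob
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < h and 0 <= nx < w
                    if inside and binary_mask[ny][nx] and not labels[ny][nx]:
                        labels[ny][nx] = next_id
                        queue.append((ny, nx))
    return labels, next_id


# Semantic view: every foreground pixel is just "cell"; identities are not recorded.
cells = [
    [1, 1, 0, 0, 1],
    [1, 1, 0, 0, 1],
    [0, 0, 0, 1, 0],
    [0, 1, 0, 1, 0],
]
instance_map, count = label_instances(cells)
print(count)  # 4
```

Diagonal neighbors are not joined here, so the blob at the top right and the one below it count as two cells; a different connectivity rule would give a different count, another reason explicit instance labels are more reliable.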

5. Keypoint Annotation

Keypoint annotation marks specific points of interest within an object.

This is widely used in:

- Facial recognition

- Human pose estimation

- Gesture recognition

- Sports analytics

Examples include marking body landmarks such as eyes, shoulders, elbows, and knees for motion tracking models.
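Keypoint labels are usually stored as coordinate triplets. The sketch below assumes the COCO-style layout [x1, y1, v1, x2, y2, v2, ...], where the visibility flag v is 0 (not labeled), 1 (labeled but not visible), or 2 (labeled and visible), and uses a hypothetical three-point arm skeleton rather than a full body model. It then derives an elbow angle, the kind of measurement that pose-based motion analysis builds on; `parse_keypoints` and `joint_angle` are illustrative helpers.

```python
import math

Point = tuple[float, float]

# Hypothetical three-point arm skeleton; full-body schemes use many more points.
KEYPOINT_NAMES = ["shoulder", "elbow", "wrist"]


def parse_keypoints(flat: list[float]) -> dict[str, tuple[float, float, int]]:
    """Split a COCO-style [x1, y1, v1, x2, y2, v2, ...] list into named points."""
    if len(flat) != 3 * len(KEYPOINT_NAMES):
        raise ValueError("expected one (x, y, visibility) triplet per keypoint")
    return {
        name: (flat[3 * i], flat[3 * i + 1], int(flat[3 * i + 2]))
        for i, name in enumerate(KEYPOINT_NAMES)
    }


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at point b between segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norms = math.hypot(*v1) * math.hypot(*v2)
    if norms == 0:
        raise ValueError("keypoints must not coincide")
    cos_t = (v1[0] * v2[0] + v1[1] * v2[1]) / norms
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_t))))


# The wrist is flagged 1: occluded, but the annotator still estimated its position.
arm = parse_keypoints([100, 100, 2, 100, 150, 2, 150, 150, 1])
pts = {name: (x, y) for name, (x, y, v) in arm.items() if v > 0}
print(joint_angle(pts["shoulder"], pts["elbow"], pts["wrist"]))
```

Keeping occluded points (v = 1) with estimated positions, rather than dropping them, lets a pose model learn where hidden joints are likely to be.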

How to Choose the Right Annotation Type

Choosing the right method depends on project objectives.

Use Bounding Boxes When:

- Speed and scalability are priorities

- Objects are clearly separable

- Real-time detection is needed

Use Polygons When:

- Shapes are irregular

- High boundary accuracy is required

- Object contours affect predictions

Use Segmentation When:

- Pixel-level precision is needed

- Scene context matters

- Multiple overlapping classes exist

Use Keypoints When:

- Landmark detection is central

- Motion analysis is involved
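The checklist above can be expressed as a small rule-based helper. This is a hypothetical sketch: the `ProjectNeeds` fields and the order in which rules are checked are assumptions chosen for illustration, and it adds one flag not in the checklist, `separate_instances`, to choose between semantic and instance segmentation. Real projects often combine several annotation types, so treat the result as a starting point rather than a decision.

```python
from dataclasses import dataclass


@dataclass
class ProjectNeeds:
    # Hypothetical flags mirroring the checklist; not a standard schema.
    landmarks_or_motion: bool = False
    pixel_precision: bool = False
    scene_context: bool = False
    overlapping_classes: bool = False
    separate_instances: bool = False
    irregular_shapes: bool = False
    contours_affect_predictions: bool = False


def suggest_annotation_type(needs: ProjectNeeds) -> str:
    """Map project requirements to an annotation type, most specific rule first."""
    if needs.landmarks_or_motion:
        return "keypoints"
    if needs.pixel_precision or needs.scene_context or needs.overlapping_classes:
        return "instance segmentation" if needs.separate_instances else "semantic segmentation"
    if needs.irregular_shapes or needs.contours_affect_predictions:
        return "polygons"
    # Default: clearly separable objects where speed and scale matter most.
    return "bounding boxes"


print(suggest_annotation_type(ProjectNeeds(irregular_shapes=True)))  # polygons
print(suggest_annotation_type(ProjectNeeds(pixel_precision=True, separate_instances=True)))
```

Checking the more demanding requirements first means a project that needs both fine contours and full scene context lands on segmentation, which also captures contours.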

At Annotera, our role as a leading data annotation company is to help businesses match annotation strategies with their AI use cases for optimal ROI.

The Role of Dataset Complexity

Complex visual datasets often include:

- Occluded objects

- Crowded scenes

- Variable lighting

- Motion blur

- Fine edge boundaries

These challenges require a thoughtful annotation workflow backed by domain expertise and rigorous QA.
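One common QA check is inter-annotator agreement: two annotators label the same image, and objects whose boxes overlap too little, or that only one annotator labeled, are sent for review. The sketch below measures overlap with intersection over union (IoU). It assumes boxes in corner format, matched across annotators by a shared object ID, and uses a 0.7 threshold that is purely illustrative; the right cut-off depends on the project. `iou` and `flag_disagreements` are hypothetical helpers.

```python
# Corner-format box: (x_min, y_min, x_max, y_max) in pixels.
Box = tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes (0.0 disjoint, 1.0 identical)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def flag_disagreements(first: dict[str, Box], second: dict[str, Box],
                       threshold: float = 0.7) -> list[str]:
    """Object IDs labeled by only one annotator, or whose boxes overlap below threshold."""
    flagged = list(first.keys() ^ second.keys())  # labeled by one annotator only
    for obj_id in first.keys() & second.keys():
        if iou(first[obj_id], second[obj_id]) < threshold:
            flagged.append(obj_id)
    return sorted(flagged)


annotator_a = {"car_1": (10, 10, 50, 40), "ped_1": (60, 20, 70, 50)}
annotator_b = {"car_1": (12, 10, 52, 40), "ped_1": (60, 20, 80, 50),
               "ped_2": (90, 20, 100, 50)}
print(flag_disagreements(annotator_a, annotator_b))  # ['ped_1', 'ped_2']
```

Here the two car boxes agree closely (IoU about 0.9), while one annotator drew the first pedestrian twice as wide (IoU 0.5) and missed the second pedestrian entirely.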

For example, satellite imagery datasets with roads, buildings, trees, and vehicles may require a combination of polygon annotation and segmentation for best performance.
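Combining polygons with segmentation usually means burning polygon outlines into a per-pixel mask. The sketch below does this with the even-odd (ray-casting) rule, testing each pixel at its center. It assumes pixel (col, row) covers the unit square starting at (col, row), and that polygons drawn later overwrite earlier ones where they overlap. `rasterize_polygon` and the class IDs are hypothetical and written for clarity rather than speed; libraries such as OpenCV provide optimized equivalents like `cv2.fillPoly`.

```python
from typing import Optional


def rasterize_polygon(points: list[tuple[float, float]], width: int, height: int,
                      class_id: int, mask: Optional[list[list[int]]] = None) -> list[list[int]]:
    """Write class_id into every pixel whose center lies inside the polygon."""
    if mask is None:
        mask = [[0] * width for _ in range(height)]
    n = len(points)
    for row in range(height):
        py = row + 0.5  # pixel-center y
        for col in range(width):
            px = col + 0.5  # pixel-center x
            inside = False
            for i in range(n):
                x1, y1 = points[i]
                x2, y2 = points[(i + 1) % n]
                # Count edges crossed by a ray from the center toward +x (even-odd rule).
                if (y1 > py) != (y2 > py):
                    x_cross = x1 + (py - y1) * (x2 - x1) / (y2 - y1)
                    if px < x_cross:
                        inside = not inside
            if inside:
                mask[row][col] = class_id
    return mask


BUILDING, ROAD = 1, 2  # hypothetical class IDs
mask = rasterize_polygon([(1, 0), (4, 0), (4, 2), (1, 2)], 6, 4, BUILDING)
mask = rasterize_polygon([(0, 3), (6, 3), (6, 4), (0, 4)], 6, 4, ROAD, mask)
for row in mask:
    print(row)
# [0, 1, 1, 1, 0, 0]
# [0, 1, 1, 1, 0, 0]
# [0, 0, 0, 0, 0, 0]
# [2, 2, 2, 2, 2, 2]
```

Because later polygons overwrite earlier ones, the drawing order encodes which class wins where outlines overlap, for example drawing vehicles after roads.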

This is where professional data annotation outsourcing becomes valuable. Outsourcing to specialists like Annotera ensures consistency, scalability, and faster turnaround without compromising label quality.

Why Choose Annotera

As a trusted image annotation company, Annotera provides tailored annotation solutions for complex computer vision projects across industries.

Our strengths include:

- Domain-specific annotation expertise

- Multi-layer quality validation

- Scalable workforce

- Fast delivery cycles

- Custom annotation guidelines

Whether you need bounding boxes, polygons, segmentation, or keypoint labeling, our image annotation outsourcing services are designed to support enterprise AI teams at scale.

Conclusion

The right annotation type can significantly improve the performance of AI and machine learning models trained on complex visual datasets. From simple object detection to pixel-level segmentation, the choice must align with the dataset’s complexity and the model’s intended output.

At Annotera, we combine precision, scalability, and domain expertise to help businesses build smarter AI systems through reliable annotation workflows. As a dependable data annotation company and image annotation company, we ensure your visual data is transformed into high-value training datasets that drive real results.
