Accuracy Is Not Enough: Understanding Metrics

17 Apr, 2026


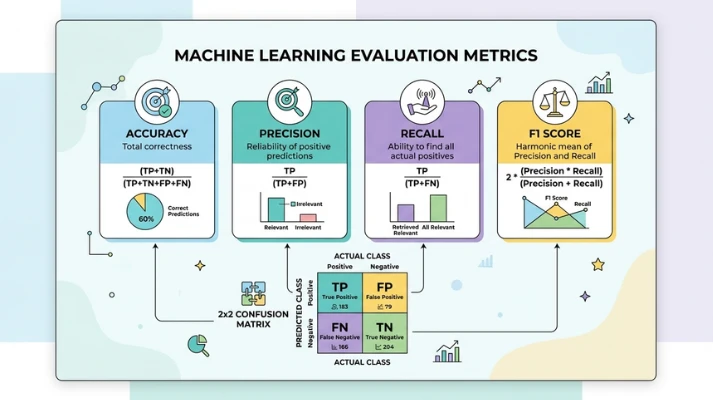

Learn why accuracy alone is not enough and explore key metrics like precision, recall, and F1 score for better model evaluation.

When building machine learning models, accuracy is often the first metric people look at. It feels simple and direct, showing how often a model makes correct predictions. However, relying only on accuracy can lead to misleading conclusions, especially when dealing with real-world data. Many problems require a deeper understanding of performance beyond just correct or incorrect outcomes. If you want to build strong foundational skills, enroll in a Data Science Course in Mumbai at FITA Academy to strengthen your practical understanding.

Why Accuracy Can Be Misleading

Accuracy is the proportion of correct predictions out of all predictions made. While this sounds useful, it does not always reflect true model performance. For example, in a dataset where one class dominates, a model can achieve high accuracy by simply predicting the majority class every time.

This becomes a serious issue in cases like fraud detection or medical diagnosis, where the minority class is the one that matters most. If only 5 percent of transactions are fraudulent, a model that labels every transaction as legitimate reaches 95 percent accuracy while detecting no fraud at all. This is why accuracy alone cannot capture the full picture of model effectiveness.
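The majority-class problem is easy to reproduce. The following is a minimal sketch using made-up labels (5 fraud cases among 100 transactions) and a hypothetical "model" that always predicts the majority class; the data and the `accuracy` helper are assumptions for illustration only.

```python
# Hypothetical data: 1 = fraud (minority class), 0 = legitimate.
y_true = [1] * 5 + [0] * 95

# A "model" that always predicts the majority class.
y_pred = [0] * len(y_true)


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


# Count how many actual fraud cases the model flagged.
fraud_caught = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)

print(f"accuracy: {accuracy(y_true, y_pred):.2f}")             # accuracy: 0.95
print(f"fraud cases caught: {fraud_caught} of {sum(y_true)}")  # 0 of 5
```

The accuracy looks strong even though the model is useless for the task it was built for.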

Understanding Precision and Recall

To go beyond accuracy, it is important to understand precision and recall. Precision is the proportion of predicted positive cases that are actually positive. Recall is the proportion of actual positive cases that the model correctly identified.
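In terms of counts, precision is TP / (TP + FP) and recall is TP / (TP + FN), where TP, FP, and FN are true positives, false positives, and false negatives. A small sketch on made-up labels follows; the helper names are illustrative, and returning 0.0 when a denominator is zero is a convention chosen here, not the only possible one.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary problem."""
    tp = fp = fn = tn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive and t == positive:
            tp += 1
        elif p == positive:
            fp += 1
        elif t == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def precision(tp, fp):
    # Of everything predicted positive, how much really was positive?
    return tp / (tp + fp) if tp + fp else 0.0


def recall(tp, fn):
    # Of everything that really was positive, how much did we find?
    return tp / (tp + fn) if tp + fn else 0.0


# Hypothetical labels: 4 actual positives, 6 actual negatives.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
print(f"precision: {precision(tp, fp):.2f}")  # precision: 0.67
print(f"recall:    {recall(tp, fn):.2f}")     # recall:    0.50
```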

These two metrics highlight different aspects of model performance. Precision focuses on correctness, while recall focuses on completeness. In many applications, there is a trade-off between them: making a model more willing to predict the positive class, for instance by lowering its decision threshold, usually raises recall but lowers precision. Learning how to balance these metrics is essential for building reliable models, and if you want structured guidance, you can consider enrolling in a Data Science Course in Kolkata to deepen your understanding.
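One way to see the trade-off is to vary the decision threshold applied to a model's scores. The sketch below assumes a hypothetical model that outputs a score between 0 and 1 for each example; the scores, labels, and the `precision_recall_at` helper are invented for illustration.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when scores >= threshold are predicted positive."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, preds) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical true labels and model scores, sorted by score for readability.
y_true = [1, 1, 1, 0, 1, 0, 0, 0]
scores = [0.95, 0.85, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10]

for threshold in (0.8, 0.5, 0.25):
    p, r = precision_recall_at(y_true, scores, threshold)
    print(f"threshold={threshold:<4}  precision={p:.2f}  recall={r:.2f}")
# threshold=0.8   precision=1.00  recall=0.50
# threshold=0.5   precision=0.75  recall=0.75
# threshold=0.25  precision=0.67  recall=1.00
```

On this toy data, lowering the threshold finds more of the actual positives but lets in more false alarms.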

The Role of F1 Score

The F1 score combines precision and recall into a single metric, defined as their harmonic mean. It offers a balanced assessment when both false positives and false negatives matter, which makes it particularly useful for imbalanced datasets.

Instead of focusing on just one metric, the F1 score rewards models that do well on both. Because the harmonic mean is pulled toward the smaller of the two values, the F1 score is high only when precision and recall are both high. This encourages a more thoughtful approach to model evaluation and reduces the risk of relying on misleading results.
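The F1 score is conventionally computed as 2 × precision × recall / (precision + recall), the harmonic mean of the two. The sketch below compares it with a plain average on invented precision and recall values; treating the 0/0 case as 0.0 is an assumption made here for convenience.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical model: very precise, but it misses most positives.
precision, recall = 0.9, 0.1

arithmetic_mean = (precision + recall) / 2
print(f"arithmetic mean: {arithmetic_mean:.2f}")              # 0.50
print(f"F1 score:        {f1_score(precision, recall):.2f}")  # 0.18

# A balanced model with the same arithmetic mean scores much higher.
print(f"balanced F1:     {f1_score(0.5, 0.5):.2f}")           # 0.50
```

The lopsided model and the balanced one share the same simple average, but F1 separates them clearly.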

Beyond Basic Metrics

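There are other important evaluation tools, such as confusion matrices and ROC curves. A confusion matrix tabulates predictions against actual labels, counting how many examples of each true class were assigned to each predicted class. For a binary problem, its four cells are the true positives, false positives, true negatives, and false negatives, which makes it easy to identify the specific types of errors the model makes.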
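A confusion matrix can be built with nothing more than a counter over (true label, predicted label) pairs. This is a minimal sketch on made-up spam/ham labels; the `confusion_matrix` helper here is written for illustration, with rows as true classes and columns as predicted classes.

```python
from collections import Counter


def confusion_matrix(y_true, y_pred, labels):
    """Rows are true classes, columns are predicted classes, in `labels` order."""
    counts = Counter(zip(y_true, y_pred))
    return [[counts[(t, p)] for p in labels] for t in labels]


# Hypothetical labels for six emails.
y_true = ["spam", "spam", "ham", "ham", "ham", "spam"]
y_pred = ["spam", "ham", "ham", "spam", "ham", "spam"]
labels = ["ham", "spam"]

matrix = confusion_matrix(y_true, y_pred, labels)

# Print a small table: one row per true class.
print("true \\ pred  " + "  ".join(f"{name:>4}" for name in labels))
for name, row in zip(labels, matrix):
    print(f"{name:>11}  " + "  ".join(f"{n:>4}" for n in row))
```

Here the off-diagonal cells show one legitimate email flagged as spam and one spam message that slipped through.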

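An ROC curve plots the true positive rate against the false positive rate as the decision threshold varies, and the AUC (area under the curve) summarizes it in a single number: 1.0 means the model ranks every positive above every negative, while 0.5 is no better than random guessing. These metrics are useful when comparing multiple models and selecting the best one. By exploring these tools, you gain a clearer understanding of how your model behaves in different scenarios.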
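AUC has a convenient interpretation: it equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as half. The sketch below computes it directly from that definition for two hypothetical models; the scores are invented, and the pairwise loop is chosen for clarity rather than speed.

```python
def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(pos) * len(neg))


# Hypothetical labels and the scores two different models assign to them.
y_true = [1, 1, 1, 0, 1, 0, 0, 0]
model_a = [0.95, 0.85, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10]
model_b = [0.60, 0.40, 0.70, 0.80, 0.30, 0.50, 0.20, 0.10]

print(f"model A AUC: {roc_auc(y_true, model_a):.4f}")  # 0.9375
print(f"model B AUC: {roc_auc(y_true, model_b):.4f}")  # 0.6250
```

On this toy data, model A separates the classes far better than model B, regardless of which threshold is eventually chosen.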

Choosing the Right Metric

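The choice of metric depends on the problem you are solving. For example, in spam detection, a false positive (a legitimate email sent to the spam folder) is usually more costly than a false negative (a spam message that reaches the inbox), so precision matters most. In medical diagnosis, missing a disease can be far more serious than a false alarm, so recall takes priority.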

This means there is no one-size-fits-all metric. You must align your evaluation method with the real-world cost of each type of error your model can make. Understanding the context enables you to make more informed choices and develop models that genuinely provide value.
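One simple way to make that alignment concrete is to put a rough cost on each error type and compare models by total cost. The sketch below is purely illustrative: the error counts and the 10-to-1 cost ratios are assumptions, not measurements, and `total_cost` is a hypothetical helper.

```python
def total_cost(fp, fn, cost_fp, cost_fn):
    """Total cost of a model's errors given a cost per error type."""
    return fp * cost_fp + fn * cost_fn


# Hypothetical error counts for two models on the same test set.
model_a = {"fp": 30, "fn": 5}    # many false alarms, few misses
model_b = {"fp": 5, "fn": 20}    # few false alarms, more misses

# Assumed costs: in medical screening a miss is 10x worse than a false alarm;
# in spam filtering a blocked legitimate email is 10x worse than a missed spam.
scenarios = {
    "medical screening": {"cost_fp": 1, "cost_fn": 10},
    "spam filtering": {"cost_fp": 10, "cost_fn": 1},
}

for name, costs in scenarios.items():
    cost_a = total_cost(**model_a, **costs)
    cost_b = total_cost(**model_b, **costs)
    better = "A" if cost_a < cost_b else "B"
    print(f"{name}: A={cost_a}, B={cost_b}, prefer model {better}")
# medical screening: A=80, B=205, prefer model A
# spam filtering: A=305, B=70, prefer model B
```

The same two models swap places depending on which kind of error the application can least afford.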

Accuracy is a useful starting point, but it is not enough to evaluate a model effectively. By understanding metrics like precision, recall, and F1 score, you can gain a more complete view of performance. This leads to better models and more reliable outcomes. If you are looking to build these skills in a structured way, you can consider taking a Data Science Course in Delhi to advance your learning journey.

