Optimization Techniques That Power Modern Models

22 Apr, 2026

Learn how optimization techniques improve model accuracy and power modern data science applications in a simple and clear way.

Optimization lies at the heart of every successful data science model. It is the process that helps models learn patterns by adjusting their internal parameters step by step. Without proper optimization, even the most advanced algorithms fail to deliver accurate results. Understanding how optimization works can give beginners a strong foundation in machine learning. If you are looking to build practical skills, you can consider enrolling in a Data Science Course in Trivandrum at FITA Academy to gain structured guidance and hands-on experience.

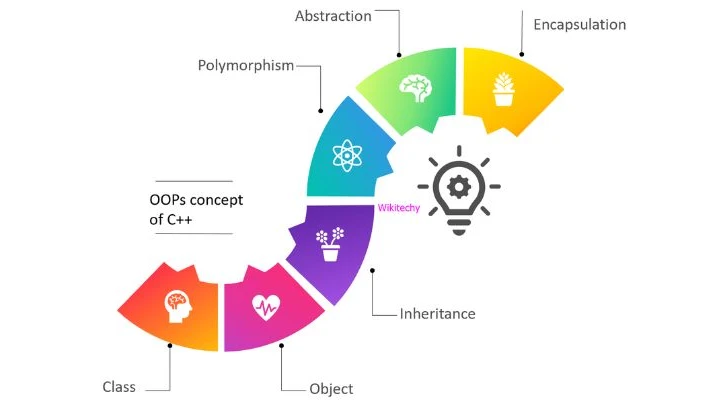

What Is Optimization in Data Science?

Optimization is the process of minimizing or maximizing a function to reach the best achievable result. In machine learning, this usually means minimizing a loss function that measures the error between predicted and actual values. The model learns by repeatedly updating its parameters based on this error, and each update is meant to bring its predictions closer to the actual values. The process may sound complex, but it follows a simple idea: learn from mistakes and improve gradually.
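To make the idea of "error between predicted and actual values" concrete, here is a minimal sketch of one common loss function, mean squared error. The article does not name a specific loss, so this choice, the function name, and the sample numbers are illustrative assumptions.

```python
def mean_squared_error(predicted, actual):
    """Average of the squared differences between predictions and targets."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)

# Made-up values, used only to show that a closer guess gives a lower error.
actual = [3.0, 5.0, 7.0]
rough_guess = [2.0, 5.0, 9.0]
better_guess = [3.0, 5.0, 7.5]

rough_error = mean_squared_error(rough_guess, actual)
better_error = mean_squared_error(better_guess, actual)
print(rough_error, better_error)
```

An optimizer's job is to move the parameters so that a number like this keeps shrinking.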

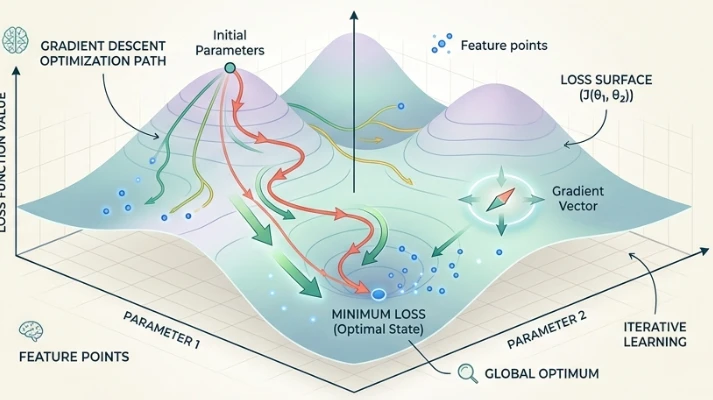

Gradient Descent and Its Variants

One of the most commonly used optimization techniques is gradient descent. It works by calculating the gradient (slope) of the error function with respect to the model parameters and moving the parameters a small step in the opposite direction to reduce the error. This method is simple yet powerful, which is why it is used across many kinds of models. Stochastic gradient descent updates the parameters using one training example at a time, while mini-batch gradient descent uses a small subset of the data for each update. Because neither has to process the whole dataset before every update, these variants make training on large datasets practical. For learners who want to explore these concepts in depth, taking a Data Science Course in Kochi can provide clarity through guided practice and real-world examples.
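The following is a minimal sketch of gradient descent and its variants, assuming a toy linear model y = w * x + b trained with mean squared error. The model, the data, the hyperparameter values, and the helper names `gradient_step` and `train` are illustrative assumptions, not something the article prescribes. The only difference between the three variants here is how many examples feed each update.

```python
import random

def gradient_step(w, b, batch, learning_rate):
    """One update of w and b for y = w * x + b, using the MSE gradient on the batch."""
    n = len(batch)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in batch) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in batch) / n
    # Move against the gradient to reduce the error.
    return w - learning_rate * grad_w, b - learning_rate * grad_b

def train(data, learning_rate=0.02, epochs=2000, batch_size=None, seed=0):
    """batch_size=None uses the full dataset; 1 gives stochastic; larger values give mini-batch."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    size = batch_size or len(data)
    for _ in range(epochs):
        shuffled = data[:]
        rng.shuffle(shuffled)
        for start in range(0, len(shuffled), size):
            w, b = gradient_step(w, b, shuffled[start:start + size], learning_rate)
    return w, b

# Noise-free toy data generated from y = 2x + 1.
data = [(x, 2.0 * x + 1.0) for x in [0.0, 1.0, 2.0, 3.0, 4.0]]

w_full, b_full = train(data)                # batch gradient descent
w_mini, b_mini = train(data, batch_size=2)  # mini-batch gradient descent
w_sgd, b_sgd = train(data, batch_size=1)    # stochastic gradient descent
print(w_full, b_full, w_mini, b_mini, w_sgd, b_sgd)
```

On this tiny, noise-free dataset all three variants should recover values close to w = 2 and b = 1. On real data the stochastic variants take noisier steps but make many more updates per pass over the data.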

Learning Rate and Convergence

The learning rate is an essential setting in the optimization process. It determines how big each step is when the model parameters are updated. A learning rate that is too high can cause the model to overshoot the best solution or even diverge, while one that is too low makes training needlessly slow. Striking the right balance is crucial for smooth convergence. Convergence refers to the point where further updates no longer meaningfully reduce the error and the model settles on a stable solution. Proper tuning of the learning rate helps achieve faster and more reliable results.
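The effect of the learning rate can be seen on a deliberately simple function. This sketch assumes the toy objective f(x) = x**2, whose gradient is 2 * x and whose minimum is at x = 0. The function name and the three learning rates are illustrative choices.

```python
def minimize_quadratic(learning_rate, start=10.0, steps=50):
    """Run plain gradient descent on f(x) = x**2 and return the final x."""
    x = start
    for _ in range(steps):
        gradient = 2 * x
        x -= learning_rate * gradient
    return x

too_high = minimize_quadratic(1.1)    # each step overshoots, so |x| grows
too_low = minimize_quadratic(0.001)   # x barely moves from its start in 50 steps
balanced = minimize_quadratic(0.3)    # x shrinks quickly toward the minimum at 0
print(too_high, too_low, balanced)
```

Each update multiplies x by (1 - 2 * learning_rate), which explains all three behaviours. A factor larger than 1 in magnitude diverges, a factor just below 1 converges slowly, and a factor well inside (-1, 1) converges quickly.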

Advanced Optimization Techniques

Modern models often use more advanced optimization methods to improve performance. Momentum accelerates learning by carrying a fraction of the previous updates into the current one, which smooths out the path toward the minimum. Adaptive methods such as Adam and RMSprop adjust the effective step size for each parameter automatically, based on the history of its gradients. These approaches often make optimization more efficient and stable. They are particularly useful for complex datasets and deep learning models. Understanding these techniques can help beginners appreciate how modern systems achieve high accuracy.
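As a rough illustration, the sketch below implements the standard momentum and Adam update rules by hand. It assumes a toy objective f(x, y) = x**2 + 10 * y**2 with its minimum at (0, 0). The objective, the starting point, and the hyperparameter values are assumptions chosen for the example, and real projects would normally use a library optimizer instead.

```python
import math

def gradient(point):
    """Gradient of the toy function f(x, y) = x**2 + 10 * y**2."""
    x, y = point
    return [2 * x, 20 * y]

def momentum_descent(start, learning_rate=0.02, beta=0.9, steps=300):
    """Gradient descent with momentum: the velocity remembers past updates."""
    point = list(start)
    velocity = [0.0] * len(point)
    for _ in range(steps):
        grad = gradient(point)
        for i in range(len(point)):
            velocity[i] = beta * velocity[i] - learning_rate * grad[i]
            point[i] += velocity[i]
    return point

def adam_descent(start, learning_rate=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    """Adam: scales each parameter's step using running gradient statistics."""
    point = list(start)
    m = [0.0] * len(point)  # running average of gradients
    v = [0.0] * len(point)  # running average of squared gradients
    for t in range(1, steps + 1):
        grad = gradient(point)
        for i in range(len(point)):
            m[i] = beta1 * m[i] + (1 - beta1) * grad[i]
            v[i] = beta2 * v[i] + (1 - beta2) * grad[i] ** 2
            m_hat = m[i] / (1 - beta1 ** t)  # bias correction for early steps
            v_hat = v[i] / (1 - beta2 ** t)
            point[i] -= learning_rate * m_hat / (math.sqrt(v_hat) + eps)
    return point

momentum_result = momentum_descent([5.0, 5.0])
adam_result = adam_descent([5.0, 5.0])
print(momentum_result, adam_result)
```

Both runs should end close to the minimum at (0, 0). In Adam, the division by the square root of `v_hat` is what gives each parameter its own effective step size.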

Challenges in Optimization

Optimization is not always straightforward and comes with its own challenges. Local minima, points where the error is lower than at nearby points but not the lowest overall, can prevent the model from finding the best solution. Overfitting is another issue, where the model performs well on training data but poorly on new data. Regularization techniques, which penalize overly complex models, and validation on held-out data can help address these challenges. Developing an intuition for these problems is important for building reliable models.
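One widely used regularization technique is an L2 penalty, which adds a term proportional to the squared weight to the loss. The sketch below assumes a one-parameter model y = w * x and made-up data. The function name `fit_weight` and the penalty strength are illustrative, and the article does not single out L2 over other forms of regularization.

```python
def fit_weight(data, l2_penalty=0.0, learning_rate=0.01, steps=500):
    """Gradient descent on mean((w * x - y)**2) + l2_penalty * w**2."""
    w = 0.0
    n = len(data)
    for _ in range(steps):
        data_grad = sum(2 * (w * x - y) * x for x, y in data) / n
        penalty_grad = 2 * l2_penalty * w  # pulls the weight toward zero
        w -= learning_rate * (data_grad + penalty_grad)
    return w

# Made-up, slightly noisy data that roughly follows y = 2x.
data = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8)]

w_plain = fit_weight(data)
w_regularized = fit_weight(data, l2_penalty=1.0)
print(w_plain, w_regularized)
```

The penalized fit ends with a smaller weight than the plain fit. Shrinking weights in this way limits how closely the model can chase noise in the training data, and the penalty strength is usually chosen by checking error on held-out validation data.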

Optimization techniques are the driving force behind modern data science models. They enable systems to learn from data and improve over time. By understanding these methods, beginners can build a strong base for advanced topics. Consistent practice and real-world exposure make a significant difference in mastering optimization. If you want to strengthen your skills further, consider signing up for a Data Science Course in Pune to continue your learning journey with expert support.
